A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

CPU Tests: Rendering

Rendering tests, compared to others, are often a little simpler to digest and automate. All the tests put out some sort of score or time, usually in a way that makes it fairly easy to extract. These tests are some of the most strenuous in our list, due to the highly threaded nature of rendering and ray-tracing, and can draw a lot of power. If a system is not properly configured to deal with the thermal requirements of the processor, the rendering benchmarks are where it would show most easily, as the frequency drops over a sustained period of time. Most benchmarks in this case are re-run several times, and the key to this is having an appropriate idle/wait time between benchmarks to allow temperatures to normalize after the last test.

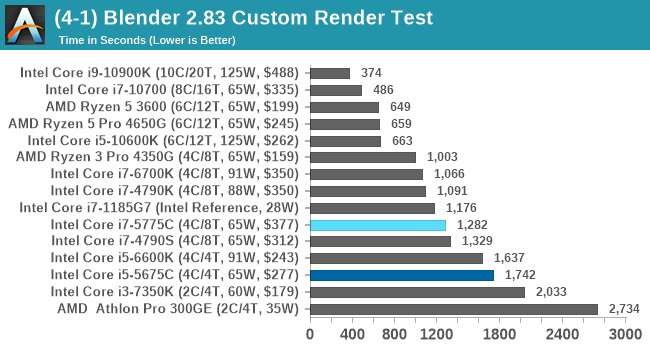

Blender 2.83 LTS: Link

One of the popular tools for rendering is Blender, a public open-source project that anyone in the animation industry can get involved in. This extends to conferences, use in films and VR, with a dedicated Blender Institute, and everything you might expect from a professional software package (except perhaps a professional grade support package). With it being open-source, studios can customize it in as many ways as they need to get the results they require. It ends up being a big optimization target for both Intel and AMD in this regard.

For benchmarking purposes, we fell back to rendering a single frame from a detailed project. Most reviews, as we have done in the past, focus on one of the classic Blender renders, known as BMW_27. It can take anywhere from a few minutes to almost an hour on a regular system. However, now that Blender has moved to a Long Term Support (LTS) model with the latest 2.83 release, we decided to go for something different.

We use this scene, called PartyTug at 6AM by Ian Hubert, which is the official image of Blender 2.83. It is 44.3 MB in size, and uses some of the more modern compute properties of Blender. As it is more complex than the BMW scene, but uses different aspects of the compute model, time to process is roughly similar to before. We loop the scene for at least 10 minutes, taking the average time of the completed renders. Blender offers a command-line tool for batch commands, and we redirect the output into a text file.
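
The "loop for at least 10 minutes, then average" approach can be sketched in Python as below. This is an illustration only, not the harness used for these results: the function names, the log file name, and `scene.blend` are hypothetical, and the only Blender options assumed are `-b` (run without the UI) and `-f` (render a given frame).

```python
import subprocess
import time
from statistics import mean
from typing import Callable, List


def loop_for_at_least(run_once: Callable[[], None],
                      min_seconds: float = 600.0,
                      clock: Callable[[], float] = time.monotonic) -> List[float]:
    """Call run_once repeatedly until min_seconds have elapsed.

    Returns the duration of every completed run, in seconds.
    """
    durations: List[float] = []
    start = clock()
    while True:
        t0 = clock()
        run_once()
        t1 = clock()
        durations.append(t1 - t0)
        # Only stop after a run has finished, so every sample is a full render.
        if t1 - start >= min_seconds:
            return durations


def render_scene_once() -> None:
    """Hypothetical single render; the scene and log file names are placeholders."""
    with open("blender_log.txt", "a") as log:
        subprocess.run(["blender", "-b", "scene.blend", "-f", "1"],
                       stdout=log, check=True)


# Example use (requires Blender on the PATH):
#   times = loop_for_at_least(render_scene_once)
#   print(f"{len(times)} renders, average {mean(times):.1f} s")
```

Checking the elapsed time only after a render completes means the total run always overshoots the 10-minute floor slightly, but no partial render ever enters the average.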

Blender is more performance oriented, so we see a more standard performance profile. The Broadwell performs akin to Tiger Lake here only by virtue of the 28W sustained power limit on Tiger Lake.

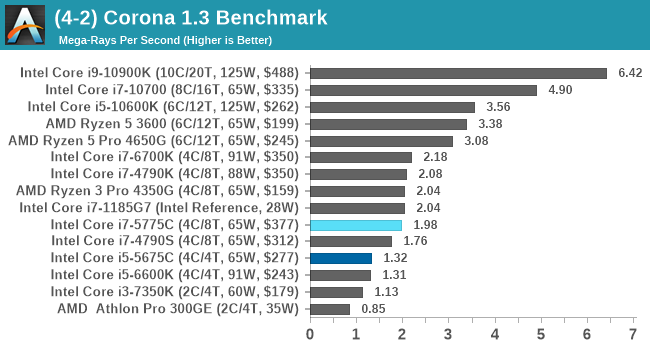

Corona 1.3: Link

Corona is billed as a popular high-performance photorealistic rendering engine for 3ds Max, with development for Cinema 4D support as well. In order to promote the software, the developers produced a downloadable benchmark on the 1.3 version of the software, with a ray-traced scene involving a military vehicle and a lot of foliage. The software does multiple passes, calculating the scene, geometry, preconditioning and rendering, with performance measured in the time to finish the benchmark (the official metric used on their website) or in rays per second (the metric we use to offer a more linear scale).
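
To see why a rate is the more linear metric, consider a fixed amount of work: a CPU that is twice as fast halves the completion time but doubles the rays per second, so bars on a rate chart scale with performance. The short Python sketch below illustrates this; the total ray count is a made-up figure for illustration, not the actual size of the Corona workload.

```python
def rays_per_second(total_rays: float, seconds: float) -> float:
    """Convert a fixed amount of work and a completion time into a throughput rate."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return total_rays / seconds


# Hypothetical workload size, chosen only for illustration.
TOTAL_RAYS = 1_200_000_000

slow = rays_per_second(TOTAL_RAYS, 600.0)  # finishes in 10 minutes
fast = rays_per_second(TOTAL_RAYS, 300.0)  # finishes in 5 minutes
print(fast / slow)  # prints 2.0: half the time, twice the score
```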

The standard benchmark provided by Corona is interface driven: the scene is calculated and displayed in front of the user, with the ability to upload the result to their online database. We got in contact with the developers, who provided us with a non-interface version that allowed for command-line entry and retrieval of the results very easily. We loop around the benchmark five times, waiting 60 seconds between each, and taking an overall average. The time to run this benchmark can be around 10 minutes on a Core i9, up to over an hour on a quad-core 2014 AMD processor or dual-core Pentium.
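
A fixed number of runs with an idle period between them could be sketched as follows. This is a minimal Python illustration under assumptions: `run_once` is a hypothetical callable that launches the benchmark and returns its result, and the command-line build provided by the developers is not shown here.

```python
import time
from statistics import mean
from typing import Callable, List


def run_with_cooldown(run_once: Callable[[], float],
                      runs: int = 5,
                      cooldown_s: float = 60.0,
                      sleep: Callable[[float], None] = time.sleep) -> float:
    """Run the benchmark `runs` times, idling between runs, and return the mean result."""
    results: List[float] = []
    for i in range(runs):
        results.append(run_once())
        # Idle between runs (not after the last one) so temperatures can settle.
        if i < runs - 1:
            sleep(cooldown_s)
    return mean(results)
```

Passing `sleep` as a parameter is only a convenience for trying the loop out without actually waiting a minute between runs.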

Similarly, Corona has no need for the eDRAM.

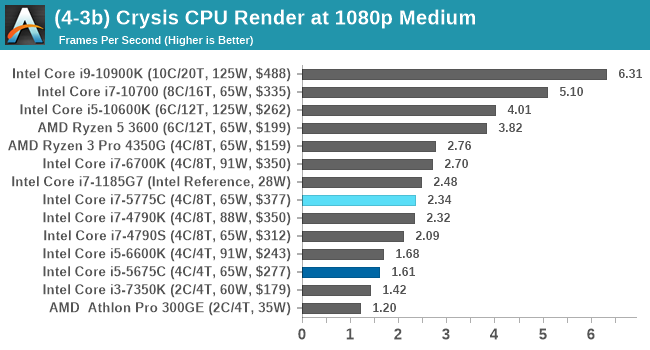

Crysis CPU-Only Gameplay

One of the most oft-used memes in computer gaming is ‘Can It Run Crysis?’. The original 2007 game, built on CryEngine by Crytek, was heralded as a computationally complex title for the hardware at the time and several years after, suggesting that a user needed graphics hardware from the future in order to run it. Fast forward over a decade, and the game runs fairly easily on modern GPUs.

But can we also apply the same concept to pure CPU rendering? Can a CPU, on its own, render Crysis? Since 64 core processors entered the market, one can dream. So we built a benchmark to see whether the hardware can.

For this test, we’re running Crysis’ own GPU benchmark, but in CPU render mode. This is a 2000 frame test, with medium settings.

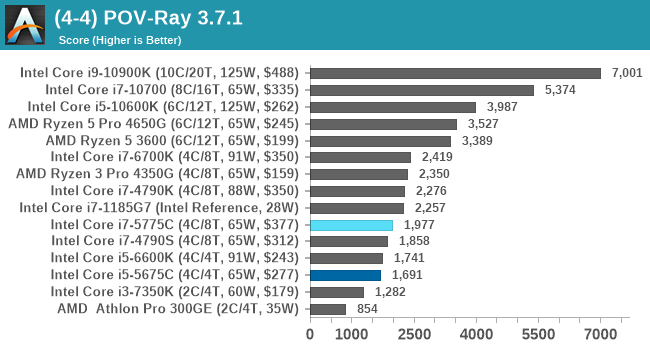

POV-Ray 3.7.1: Link

A long-time benchmark staple, POV-Ray is another rendering program that is well known to load up every single thread in a system, regardless of cache and memory levels. After a long period of POV-Ray 3.7 being the latest official release, when AMD launched Ryzen the POV-Ray codebase suddenly saw a range of activity from both AMD and Intel, knowing that the software (with the built-in benchmark) would be an optimization tool for the hardware.

We had to stick a flag in the sand when it came to selecting the version that was fair to both AMD and Intel, and still relevant to end-users. Version 3.7.1 fixes a significant issue in the early 2017 code, a write-after-read pattern that both the Intel and AMD manuals advise against, leading to a nice performance boost.

The benchmark can take over 20 minutes on a slow system with few cores, or around a minute or two on a fast system, or seconds with a dual high-core count EPYC. Because POV-Ray draws a large amount of power and current, it is important to make sure the cooling is sufficient here and the system stays in its high-power state. Using a motherboard with poor power delivery and low airflow could create an issue that won’t be obvious in some CPU positioning if the power limit only causes a 100 MHz drop as it changes P-states.

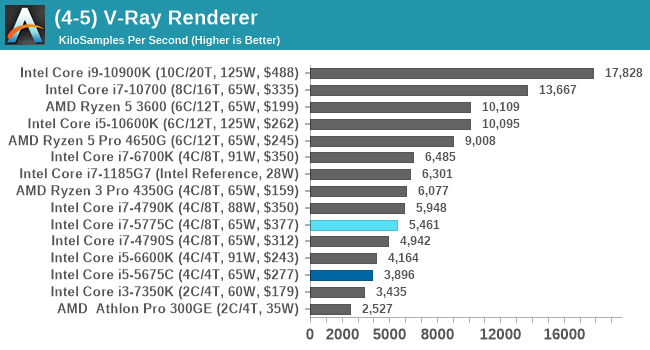

V-Ray: Link

We have a couple of renderers and ray tracers in our suite already, however V-Ray’s benchmark was requested often enough for us to roll it into our suite. Built by ChaosGroup, V-Ray is a 3D rendering package compatible with a number of popular commercial imaging applications, such as 3ds Max, Maya, Unreal, Cinema 4D, and Blender.

We run the standard standalone benchmark application, but in an automated fashion to pull out the result in the form of kilosamples/second. We run the test six times and take an average of the valid results.
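
Averaging only the valid runs might look like the Python sketch below. The text does not define what makes a result invalid, so this example assumes a failed run is recorded as `None` or as a non-positive score; both the function name and that convention are hypothetical.

```python
from statistics import mean
from typing import Iterable, Optional


def average_valid(results: Iterable[Optional[float]]) -> float:
    """Average the runs that produced a usable score, skipping failed ones."""
    # Assumption: a failed run shows up as None or as a score <= 0.
    valid = [r for r in results if r is not None and r > 0]
    if not valid:
        raise ValueError("no valid results to average")
    return mean(valid)


# Six runs in kilosamples/second, two of which failed (made-up numbers).
print(average_valid([5000.0, None, 5200.0, 0.0, 5100.0, 5100.0]))  # prints 5100.0
```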

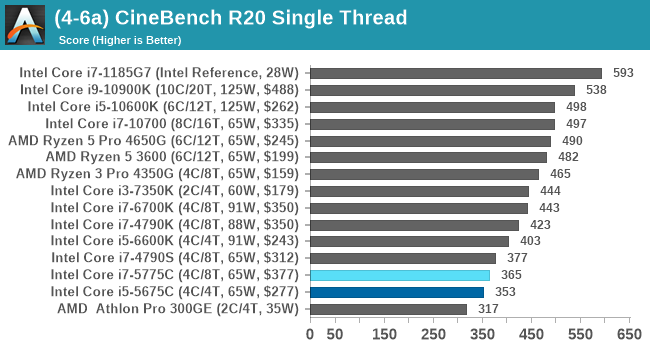

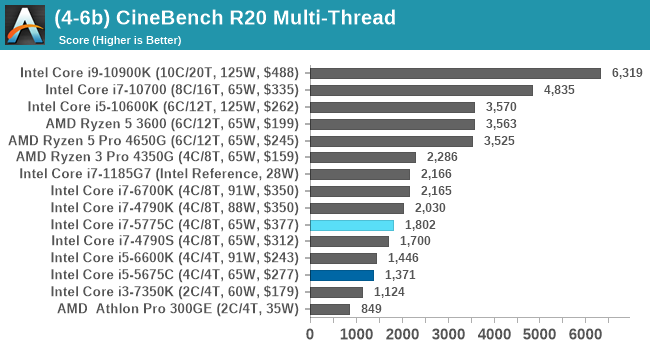

Cinebench R20: Link

Another common staple of a benchmark suite is Cinebench. Based on Cinema4D, Cinebench is a purpose-built benchmark that renders a scene with both single and multi-threaded options. The scene is identical in both cases. The R20 version means that it targets Cinema 4D R20, a slightly older version of the software which is currently on version R21. Cinebench R20 was launched given that the R15 version had been out a long time, and despite the difference between the benchmark and the latest version of the software on which it is based, Cinebench results are often quoted in marketing materials.

Results for Cinebench R20 are not comparable to R15 or older, because both the scene being used and the code path have changed. The results are output as a score from the software, which is inversely proportional to the time taken. Using the benchmark flags for single CPU and multi-CPU workloads, we run the software from the command line, which opens the test, runs it, and dumps the result into the console, which is redirected to a text file. The test is repeated for a minimum of 10 minutes for both ST and MT, and then the runs are averaged.

120 Comments

dsplover - Tuesday, November 3, 2020 - link

For Digital Audio applications the i7-5775C @ 3.3GHz was incredible when disabling the Iris GFX, turning the cache over to audio, then running a discrete GFX card. Bested my i7 4790k’s.

Tried OC’ing but even with the kick-butt Supermicro H70 it was unstable as the Ring Bus/L4 would also clock up and choked @ 2050MHz.

This rig allowed really tight low latency timings and I prayed they would release future designs with a larger cache.

AMD beat them to it w/Matisse which was good for 8 core only.

The new 5000s are going to be Digital Audio dreams @ low wattage.

Intel just keeps lagging behind.

ironicom - Tuesday, November 3, 2020 - link

fps is irrelevant in civ; turn time and load time are what matter.

vorsgren - Tuesday, November 3, 2020 - link

Thanks for using my benchmark! Hope it was useful!

Nictron - Wednesday, November 4, 2020 - link

Which benchmark was that?

erotomania - Wednesday, November 4, 2020 - link

Google the username.

vorsgren - Wednesday, November 4, 2020 - link

http://www.bay12forums.com/smf/index.php?topic=173...

Oxford Guy - Thursday, November 5, 2020 - link

"The Intel skew on this site is getting silly its becoming an Intel promo machine!"

Yes. An article that exposes how much Intel was able to get away with sandbagging because of our tech world's lack of adequate competition (seen in MANY tech areas to the point where it's more the norm than the exception), clearly such an article is showing Intel in a good light.

If you were an Intel shareholder.

For everyone else (the majority of the readers), the article condemns Intel for intentionally hobbling Skylake's gaming performance. ArsTechnica produced an article about this five years ago when it became clear that Skylake wasn't going to have EDRAM.

The ridiculousness of the situation (how Intel got away with charging premium prices for horribly hobbled parts — $10 worth of EDRAM missing, no less) really shows the world's economic system particularly poorly. For all the alleged capitalism in tech, there certainly isn't much competition. That's why Intel didn't have to ship Skylake with EDRAM. Monopolization (and near-monopoly) enables companies to do what they want to do more than anything else: sell less for more. As long as regulators are toothless and/or incompetent the situation won't improve much.

erikvanvelzen - Saturday, November 7, 2020 - link

Ever since the Pentium 4 Extreme Edition I've wondered why intel does not permanently offer a top product with a large L3 or L4 cache.

abufrejoval - Monday, November 9, 2020 - link

Just picked up a NUC8i7BEH last week (quad i7, 48EU GT3e with 128MB eDRAM), because they dropped below €300 including VAT: A pretty incredible value at that price point and extremely compatible with just about any software you can throw at it.

Yes, Tiger Lake NUC11 would be better on paper and I have tried getting a Ryzen 7-4800U (as PN50-BBR748MD), but I've never heard of one actually shipped.

It's my second NUC8i7BEH, I had gotten another a month or two previously, while it was still at €450, but decided to swap that against a hexa-core NUC10i7FNH (24EU no eDRAM) at the same price, before the 14-days zero-cost return period was up. GT3e+quad-core vs. GT2+hexa-core was a tough call to make, but actually both run really mostly server loads anyway. But at €300/quad vs €450/hexa the GT3e is quite simply for free, when the silicon die area for the GT3e/quad is in all likelihood much greater than for the GT2/hexa, even without counting the eDRAM.

My Whiskey-lake has 200MHz less top clock than the Comet-lake, but that doesn't show in single core results, where the L4 seems to put Whiskey consistently into a small lead.

GT3e doesn't quite manage to double graphics performance over GT2, but I am not planning to use either for gaming. Both do fairly well at 4k on anything 2D, even Google Map's 3D renders do pretty well.

BTW: While Google Earth Pro's Flight simulator actually gives a fairly accurate representation of the area where I live, it doesn't do great on FPS, even with an Nvidia GPU. By contrast Microsoft latest and greatest is a huge disappointment when it comes to terrain accuracy (buildings are pure fantasy, not related at all to what's actually there), but delivers ok FPS on my RTX2080ti. No, I didn't try FlightSim on the NUCs...

However, the 3D rendering pipeline Google has put into the browser variant of Google Maps, beats the socks off both Google Earth Pro and Microsoft Flight: With Chrome leading over Firefox significantly, the 3D modelled environment is mind-boggling even on the GT2 at 4k resolutions, it's buttery smooth on GT3e. A browser based flight simulator might actually give the best experience overall, quite hard to believe in a way.

It has me appreciate how good even iGPU graphics could be, if code was properly tuned to make do with what's there.

And it exposes just how bad Microsoft Flight is with nothing but Bing map data underneath: Those €120 were a full waste of money, but I just saved those from buying the second NUC8 later.
