Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Graphics Performance Comparison

With the background and context of the benchmark covered, we now dig into the data and see what we have to look forward to with DirectX 12 game performance. This benchmark has preconfigured batch files that will launch the utility at either 3840x2160 (4K) with settings at ultra, 1920x1080 (1080p) also on ultra, or 1280x720 (720p) with low settings more suited for integrated graphics environments.

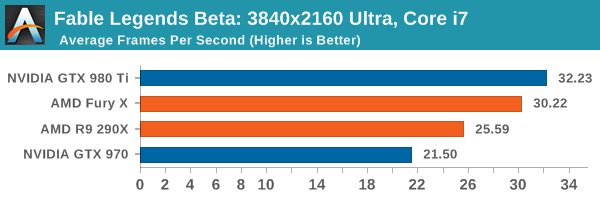

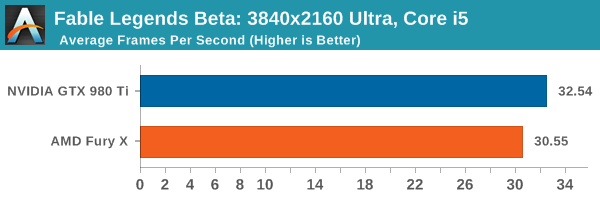

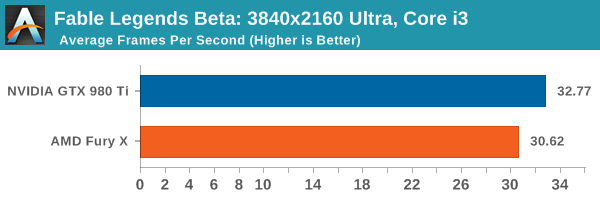

At 3840x2160, the GTX 980 Ti holds a single-digit percentage lead over the AMD Fury X, but both cards stay above the bare minimum of 30 FPS regardless of which CPU they are paired with.
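For readers who want to reproduce this kind of comparison from their own runs, a "single digit percentage lead" is just the relative difference in average frame rate between two cards. The following is a minimal Python sketch of that arithmetic; the function names and the frame rates used are illustrative placeholders, not figures taken from our charts.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how far card A leads card B, as a percentage of B's frame rate."""
    if fps_b <= 0:
        raise ValueError("fps_b must be positive")
    return (fps_a - fps_b) / fps_b * 100.0


def is_single_digit_lead(fps_a: float, fps_b: float) -> bool:
    """True when A is ahead of B by more than 0% but less than 10%."""
    lead = percent_lead(fps_a, fps_b)
    return 0.0 < lead < 10.0


# Placeholder values only (NOT measured results from this benchmark).
example_lead = percent_lead(34.0, 32.0)
```

Expressing the gap relative to the slower card keeps the figure comparable across resolutions, where absolute frame rates differ widely.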

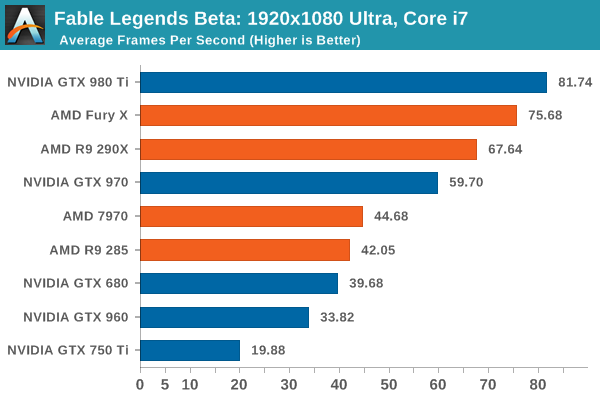

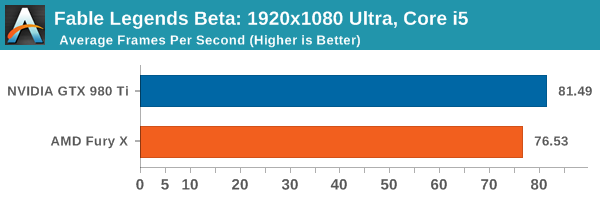

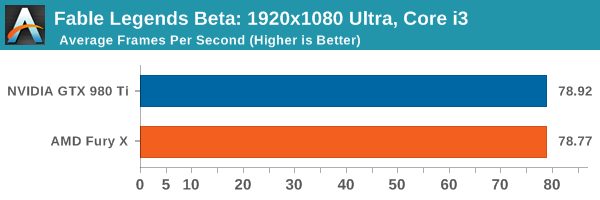

With the i5 and i7 at 1920x1080 ultra settings, the GTX 980 Ti still has that single-digit percentage lead, but at Core i3 levels of CPU power the difference is next to zero, suggesting we are CPU limited even though the frame rate difference from i3 to i5 is minimal. Looking at the range of cards under the Core i7, the interesting thing is that the GTX 970 just about hits the 60 FPS mark, while some of the older generation cards (7970/GTX 680) would require compromises in the settings to get over the 60 FPS barrier at this resolution. The GTX 750 Ti doesn't come anywhere close, suggesting that this game (under these settings) is targeting upper mainstream to lower high-end cards. It would be interesting to see whether a single overriding game setting is what ends up crippling this level of GPU.

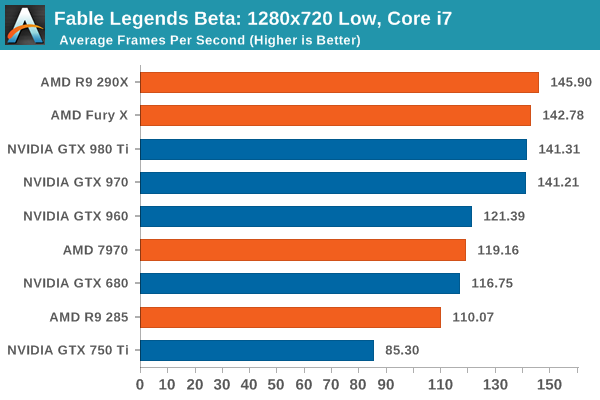

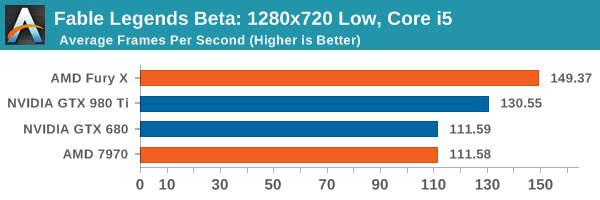

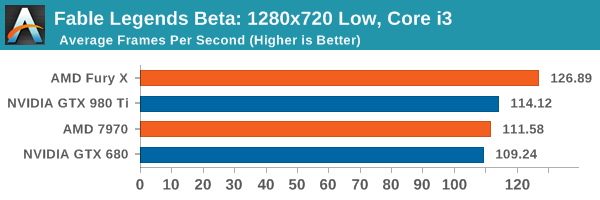

At the 720p low settings, the Core i7 pushes everything above 60 FPS, but you need at least an AMD 7970/GTX 960 to start aiming for 120 FPS, if only for high refresh rate panels. We are likely being held back by CPU performance, as illustrated by the GTX 970 and GTX 980 Ti being practically tied and the R9 290X stepping ahead of the pack. This makes it interesting when we consider integrated graphics, which we might test for a later article. It is worth noting that at this low resolution, the R9 290X and Fury X pull out a minor lead over the NVIDIA cards. The Fury X expands this lead with the i5 and i3 configurations, just rolling over into double-digit percentage gains.
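The reasoning above, that two GPUs from different performance tiers posting nearly the same frame rate points to a CPU bottleneck, can be written down as a simple check. This is only a sketch of that heuristic in Python: the function name and the 3% tolerance are assumptions chosen for illustration, not a threshold used in our testing, and the inputs are whatever average frame rates you measure yourself.

```python
def likely_cpu_limited(fps_slower_gpu: float,
                       fps_faster_gpu: float,
                       tolerance_pct: float = 3.0) -> bool:
    """Flag a probable CPU bottleneck on a fixed CPU and settings preset.

    If the nominally faster GPU gains no more than `tolerance_pct` percent
    over the slower one, the GPU is probably not the limiting factor.
    The 3% default is an arbitrary illustrative threshold.
    """
    if fps_slower_gpu <= 0:
        raise ValueError("fps_slower_gpu must be positive")
    gain_pct = (fps_faster_gpu - fps_slower_gpu) / fps_slower_gpu * 100.0
    return gain_pct <= tolerance_pct
```

A check like this only suggests where the bottleneck lies; run-to-run variance and driver behaviour can also make two cards look tied.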

141 Comments

Traciatim - Thursday, September 24, 2015 - link

RAM generally has very little to no impact on gaming except for a few strange cases (like F1). Though the machine still has its cache available, so the i3 test isn't quite the same thing as a real i3, it should be close enough that you wouldn't notice the difference.

Mr Perfect - Thursday, September 24, 2015 - link

In the future, could you please include/simulate a 4 core/8 thread CPU? That's probably what most of us have.

Oxford Guy - Thursday, September 24, 2015 - link

How about Ashes running on a Fury and a 4.5 GHz FX CPU.

Oxford Guy - Thursday, September 24, 2015 - link

and a 290X, of course, paired against a 980

vision33r - Thursday, September 24, 2015 - link

Just because a game supports DX12 doesn't mean it uses all DX12 features. It looks like they have DX12 as a check box but aren't really utilizing the complete DX12 feature set. We have to see more DX12 implementations to know for sure how each card stacks up.

Wolfpup - Thursday, September 24, 2015 - link

I'd be curious about a DirectX 12 vs 11 test at some point.

Regarding Fable Legends, WOW am I disappointed by what it is. I shouldn't be in a sense, I mean I'm not complaining that Mario Baseball isn't a Mario game, but still, a "free" to play deathmatch type game isn't what I want and isn't what I think of with Fable (Even if, again, really this could be good for people who want it, and not a bad use of the license).

Just please don't make a sequel to New Vegas or Mass Effect or Bioshock that's deathmatch LOL

toyotabedzrock - Thursday, September 24, 2015 - link

You should have used the new driver given you were told it was related to this specific game preview.

Shellshocked - Thursday, September 24, 2015 - link

Does this benchmark use Async compute?

Spencer Andersen - Thursday, September 24, 2015 - link

Negative, Unreal Engine does NOT use Async compute except on Xbox One. Considering that is one of the main features of the newer APIs, what does that tell you? Nvidia+Unreal Engine=BFF But I don't see it as a big deal considering that Frostbite and likely other engines already have most if not all DX12 features built in including Async compute.

Great article guys, looking forward to more DX12 benchmarks. It's an interesting time in gaming to say the least!

oyabun - Thursday, September 24, 2015 - link

There is something wrong with the webpages of the article, an ad by Samsung seems to cover the entire page and messes up all the rendering. Furthermore wherever I click a new tab opens at www.space.com! I had to reload several times just to be able to post this!