The ASRock X99 Extreme11 Review: Eighteen SATA Ports with Haswell-E

by Ian Cutress on March 11, 2015 8:00 AM EST

Posted in: Motherboards, Storage, ASRock, X99, LGA2011-3

If there is one thing I like about ASRock, it is their ability to do something different in a market where it is increasingly difficult to differentiate. One example is the Extreme11 series, which uses an LSI RAID controller to provide more SAS/SATA ports on the high end model. Today we have the X99 Extreme11 in for review.

ASRock X99 Extreme11 Overview

Our last review of an Extreme11 model was back in the X79 era, featuring the six SATA ports from the PCH and eight from the bundled LSI 3008 onboard controller. Our sample back then used eight PCIe lanes for the controller and achieved 4 GBps maximum sequential read and write speeds when using an eight drive SSD RAID-0 SF-2281 array. Between the X79 and the X99 model came the Z87 Extreme11/ac, which used the same LSI controller but bundled it with a port multiplier, giving sixteen SAS/SATA ports plus the six from the chipset for 22 total. With the X99 Extreme11 in this review, we get the same 3008 controller (without the multiplier), which adds eight ports to the ten from the PCH, giving eighteen in total.
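As a quick sanity check on the port counts above, the totals for the three generations can be tallied as below. This is only a sketch: the dictionary layout and function name are made up for illustration, and the per-drive figure is simply the quoted 4 GBps divided evenly across the eight drives of the X79 array.

```python
# Port counts as described in the text; the data layout is illustrative only.
boards = {
    "X79 Extreme11": {"pch": 6, "lsi": 8},
    "Z87 Extreme11/ac": {"pch": 6, "lsi": 16},  # LSI ports after the port multiplier
    "X99 Extreme11": {"pch": 10, "lsi": 8},
}


def total_ports(board):
    """Sum the chipset (PCH) ports and the LSI-attached ports."""
    return board["pch"] + board["lsi"]


totals = {name: total_ports(b) for name, b in boards.items()}

# X79 result quoted above: 4 GBps across an eight-drive RAID-0 array,
# assuming the load is spread evenly over the drives.
per_drive_gbps = 4 / 8

for name, count in totals.items():
    print(f"{name}: {count} ports")
print(f"Implied per-drive sequential rate on X79: {per_drive_gbps} GBps")
```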

One of the criticisms of the range is the lack of useful hardware RAID modes with the LSI 3008. It only gives RAID 0 and 1 (also 1E and 10) with no scope for RAID 5/6. This is partly because the controller comes without any cache (or at best a very small one), which does not help with managing such an array. ASRock's line on this is that it comes down partly to controller cost and complexity of implementation, and the suggestion is that users who require these modes should use a software RAID solution. Users who want a hardware solution will have to buy a controller card that supports it, and ASRock is keen to point out that the Extreme11 range has plenty of PCIe bandwidth to handle it.
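To put the available RAID levels in context, here is a rough sketch of the usable capacity each one gives. The function name and the drive-count rules are assumptions for illustration (RAID 1 is treated as a two-drive mirror, RAID 1E and 10 as storing every block twice), and real controllers reserve a small amount of space for metadata that is ignored here.

```python
def usable_capacity_tb(mode, drives, size_tb):
    """Approximate usable capacity for the RAID levels the text lists.

    Assumes identical drives and ignores controller metadata overhead.
    """
    if mode == "0":
        return drives * size_tb  # striping only, no redundancy
    if mode == "1":
        if drives != 2:
            raise ValueError("this sketch treats RAID 1 as a two-drive mirror")
        return size_tb
    if mode == "1E":
        if drives < 3:
            raise ValueError("RAID 1E needs at least three drives")
        return drives * size_tb / 2  # every block is stored twice
    if mode == "10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, four or more")
        return drives * size_tb / 2  # striped set of mirrored pairs
    raise ValueError(f"unsupported mode: {mode}")


# Eight 6 TB drives on the LSI ports (two drives for the RAID 1 case)
for mode, count in [("0", 8), ("1", 2), ("1E", 8), ("10", 8)]:
    print(f"RAID {mode} with {count} drives: {usable_capacity_tb(mode, count, 6)} TB")
```

With the mirrored modes, half of the raw capacity goes to redundancy, which is the trade-off RAID 5/6 would otherwise soften.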

The amount of PCIe bandwidth brings up another interesting element to the Extreme11 range. ASRock feels that their high end motherboard range must support four-way GPU configurations, preferably in x16/16/16/16 lane allocation. In order to do this, along with having enough lanes for the LSI 3008 controller that needs eight, for the X99 Extreme11 there are two PLX8747 PCIe switches on board. We covered the PLX8747 during its prominent use in the Z77 era, but a basic summary is that due in part to its FIFO buffer it can multiplex 8 or 16 PCIe lanes into 32. Thus for the X99 Extreme11 and its dual PLX8747 arrangement, each PLX switch takes 16 lanes from the CPU to give two PCIe 3.0 x16 slots, totaling four PCIe 3.0 x16 slots overall. The final eight lanes from the CPU go to the LSI controller, accounting for 40 lanes from the processor. (28 lane CPUs behave a little differently, see the review below.)

As you might imagine, two PLX8747 switches and an LSI controller onboard do not come cheap, and that is why the Extreme11 is one of the most expensive X99 motherboards on the market at $630+, only to be bested in this competition by the ASRock X99 WS-E/10G which comes with a dual port 10GBase-T controller for $670. Aside from the four PCIe 3.0 x16 slots and 18 SATA ports, the Extreme11 also comes with support for 128GB of RDIMMs, LGA2011-3 Xeon compatibility, dual Intel network ports, upgraded audio and dual PCIe 3.0 x4 M.2 slots. The market ASRock aims for with this board has high storage and compute requirements in its workstations - typically with these builds the motherboard cost is not that important, but the feature set is. That makes the X99 Extreme11 an entertaining product in an interesting market segment.

Visual Inspection

With the extra SATA ports and controller chips onboard, the Extreme11 expands into the EATX form factor, which means an extra inch or so horizontally for motherboard dimensions. Aside from the big block of SATA ports, nothing looks untoward on the board. An extended heatsink runs from around the power delivery down to the chipset heatsink, which has an added fan to deal with the two PLX8747 chips and the LSI 3008 controller.

The socket area is fairly crammed up to Intel’s specifications, with ASRock’s Super Alloy based power delivery packing in twelve phases in an example of over engineering. The DRAM slots are color coded for the black slots to be occupied first. Within the socket area there are four fan headers to use – two CPU headers in the top right (4-pin and 3-pin), a 3-pin header just below the bottom left of the socket (above the PCIe slot) and another 3-pin near the top of the SATA ports. The other two fan headers at the bottom of the board are one 4-pin and another 3-pin, with the final fan header provided for the chipset fan. This can be disabled if required by removing the cable.

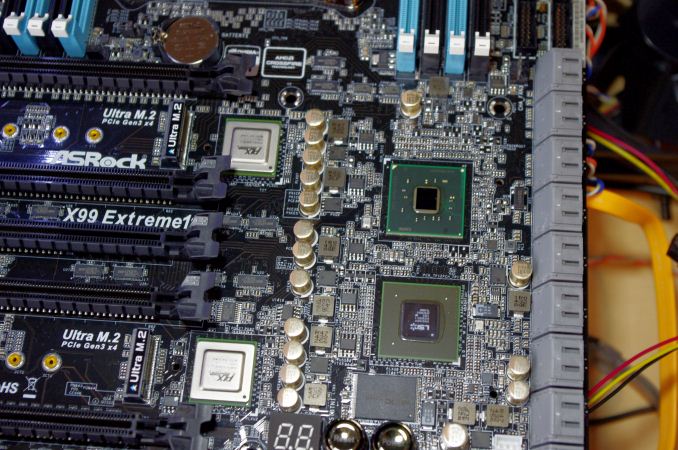

The bottom right of the motherboard next to the SATA ports and under the chipset heatsink hides the important and costly controller chips. Combining the two PLX8747 on the left, the LSI RAID controller and the chipset comes north of 30W in total for power use, hence the extra fan on the chipset.

Each PLX8747 PCIe switch can take in eight or sixteen PCIe 2.0 or PCIe 3.0 lanes, then by using a combination of a FIFO buffer and multiplexing output 32 PCIe 3.0 lanes. Sometimes this sounds like magic, but it is best to think of it as a switching FPGA – between the PCIe slots, we have full PCIe 3.0 x16 bandwidth, but if we go up the pipe back to the CPU, we are still limited by that 8/16 lane input. The benefit of the FIFO buffer is a fill twice/pour once scenario, coalescing commands and shooting them up the data path together rather than performing a one in/one out. In our previous testing the PLX8747 gave a sub 1% performance deficit in gaming, but aids compute users that need inter-GPU bandwidth. It also surpasses the SLI fixed limitation of needing eight PCIe lanes, ensuring that the NVIDIA configurations are happy.
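To put rough numbers on the upstream limit, the sketch below compares the 32 downstream lanes of one switch against an 8 or 16 lane uplink. It uses the PCIe 3.0 per-lane rate (8 GT/s with 128b/130b encoding) and ignores packet and protocol overhead, so real throughput will be somewhat lower. The function names are illustrative only.

```python
PCIE3_GT_PER_S = 8.0        # PCIe 3.0 line rate per lane, in gigatransfers/s
PCIE3_ENCODING = 128 / 130  # 128b/130b encoding efficiency


def pcie3_gbytes_per_s(lanes):
    """Approximate one-direction bandwidth in GB/s, before protocol overhead."""
    return lanes * PCIE3_GT_PER_S * PCIE3_ENCODING / 8


def oversubscription(upstream_lanes, downstream_lanes=32):
    """Ratio of downstream lanes to upstream lanes for one switch."""
    return downstream_lanes / upstream_lanes


for uplink in (16, 8):
    print(
        f"x{uplink} uplink: {pcie3_gbytes_per_s(uplink):.2f} GB/s to the CPU, "
        f"{pcie3_gbytes_per_s(32):.2f} GB/s across the slots, "
        f"{oversubscription(uplink):.0f}:1 oversubscribed"
    )
```

With a 16 lane uplink that works out to roughly 15.75 GB/s towards the CPU shared by two x16 slots, which is why slot-to-slot traffic benefits more than CPU-bound traffic.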

The LSI 3008 is a little long in the tooth having been on the X79 and Z87 Extreme11 products, but it does what ASRock wants it to do – provide extra storage ports for those that need it. Finding a case that can support 18 drives is another matter – we often see companies like Lian Li show them at Computex, and some cost as much as the motherboard. The next cost is all the drives, but I probably would not say no to an 18*6 TB system. The lack of RAID 5/6 for redundancy is still a limitation, as is the lack of a cache. Moving up the LSI stack to a controller that does offer RAID 5/6 would add further cost to the product, and at this point ASRock has little competition in this space.

On the back of the motherboard is this interesting IC from Everspin, which turns out to be 1MB of cache for the LSI controller. There is scope for ASRock to put extra cache on the motherboard, allowing for higher up RAID controllers, but the cost/competition scenario falls into play again.

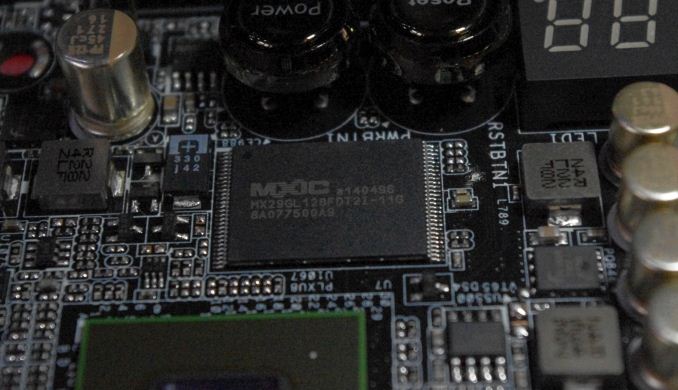

The final part of the RAID controller is this MXIC chip, which looks to be a 128Mbit flash memory IC with 110ns latency.

Aside from the fancier features, the motherboard has two USB 3.0 headers above the SATA ports (both from the PCH), power/reset buttons, a two digit debug display, two BIOS chips with a selector switch, two USB 2.0 headers, a COM header, and the usual front panel/audio headers. Bang in the middle of the board, between the PCIe slots and the DRAM slots, there is a 4-pin molex to provide extra power to the PCIe slots when multiple hungry GPUs are in play. There is also another power connector below the PCIe slots, but ASRock has told us that only one needs to be occupied at any time. I have mentioned to ASRock that the molex connector is falling out of favor with PSU manufacturers and very few users actually need one in 2015, as well as the fact that these connectors are both in fairly awkward places. The response was that the molex is the easiest to apply (compared to SATA power or 6-pin PCIe power), and the one in the middle of the board is for users that have smaller cases. I have a feeling that ASRock won’t shift much on this design philosophy unless they develop a custom connector.

The PCIe slots give x16/x16/x16/x16, with the middle slot taking eight PCIe 3.0 lanes when in use, which splits the allocation with the slot underneath into an x8/x8 arrangement. With sufficiently sized cards, this makes five cards in total possible. Normally we see the potential for a seven card setup, but ASRock has decided to implement two PCIe 3.0 x4 M.2 slots in-between a couple of the PCIe slots. The bandwidth for these slots comes from the CPU's PCIe lanes, and thus they do not get hardware RAID capabilities. However, given the PM951 is about to be released, two of them in a software RAID for 2800 MBps+ sequentials along with an 18*6 TB setup would be a super storage platform.

For users wanting to purchase the 28-lane i7-5820K for this motherboard, the PCIe allocation is a little harder to explain. The CPU gives 8 lanes to each of the PLX switches, so the full x16/x16/x16/x16 arrangement still applies, with another 8 lanes for the LSI controller. The first M.2 x4 slot gets the last four lanes and the second M.2 slot is disabled.
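The lane accounting for both CPU types can be checked with a small tally. This is a sketch that only encodes the allocations stated in this review: the 40-lane entry follows the 16/16/8 split described in the overview, which does not itemize the M.2 slots, and the table and function names are invented for illustration.

```python
# CPU lane allocations as stated in the review text (names are illustrative).
ALLOCATIONS = {
    40: {"PLX8747 #1": 16, "PLX8747 #2": 16, "LSI 3008": 8},
    28: {"PLX8747 #1": 8, "PLX8747 #2": 8, "LSI 3008": 8, "M.2 slot 1": 4},
}


def check_budget(cpu_lanes):
    """Return (lanes used, lanes left over) for a given CPU lane count."""
    used = sum(ALLOCATIONS[cpu_lanes].values())
    return used, cpu_lanes - used


for lanes in sorted(ALLOCATIONS, reverse=True):
    used, spare = check_budget(lanes)
    print(f"{lanes}-lane CPU: {used} lanes allocated, {spare} spare")
    for device, width in ALLOCATIONS[lanes].items():
        print(f"  {device}: x{width}")
```

On the 28-lane part every lane is spoken for once the first M.2 slot takes its four, which is consistent with the second M.2 slot being disabled.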

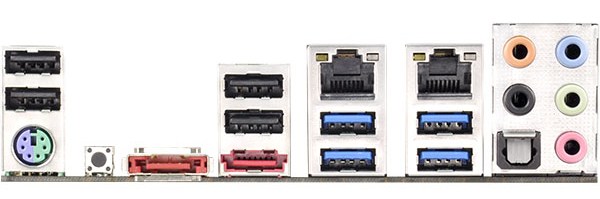

The rear panel gives four USB 2.0 ports, a combination PS/2 port, a Clear CMOS button, two eSATA ports, two USB 3.0 from the PCH, two USB 3.0 from an ASMedia controller, an Intel I211-AT network port, an Intel I218-V network port and audio jacks from the Realtek ALC1150 audio codec.

Board Features

| ASRock X99 Extreme11 | |
|---|---|
| Price | US |
| Size | E-ATX |
| CPU Interface | LGA2011-3 |
| Chipset | Intel X99 |
| Memory Slots | Eight DDR4 DIMM slots, up to Quad Channel 1600-3200 MHz<br>Supporting up to 64 GB UDIMM<br>Supporting up to 128 GB RDIMM |
| Video Outputs | None |
| Network Connectivity | Intel I211-AT<br>Intel I218-V |
| Onboard Audio | Realtek ALC1150 (via Purity Sound 2) |
| Expansion Slots | 4 x PCIe 3.0 x16<br>1 x PCIe 3.0 x8 |
| Onboard Storage | 6 x SATA 6 Gbps, RAID 0/1/5/10<br>4 x S_SATA 6 Gbps, no RAID<br>8 x SAS 12 Gbps/SATA 6 Gbps via LSI 3008<br>2 x PCIe 3.0 x4 M.2 up to 22110 |
| USB 3.0 | 6 x USB 3.0 via PCH (2 headers, 2 rear ports)<br>2 x USB 3.0 via ASMedia ASM1042 (2 rear ports) |
| Onboard | 18 x SATA 6 Gbps Ports<br>2 x USB 3.0 Headers<br>2 x USB 2.0 Headers<br>7 x Fan Headers<br>HDD Saver Header<br>Front Panel Audio Header<br>Front Panel Header<br>Power/Reset Buttons<br>Two-Digit Debug LED<br>BIOS Selection Switch<br>COM Header |
| Power Connectors | 1 x 24-pin ATX<br>1 x 8-pin CPU<br>2 x Molex for PCIe |
| Fan Headers | 2 x CPU (4-pin, 3-pin)<br>3 x CHA (4-pin, 2 x 3-pin)<br>1 x PWR (3-pin)<br>1 x SB (3-pin) |
| IO Panel | 1 x PS/2 Combination Port<br>2 x eSATA Ports<br>4 x USB 2.0<br>2 x USB 3.0 via PCH<br>2 x USB 3.0 via ASMedia<br>1 x Intel I211-AT Network Port<br>1 x Intel I218-V Network Port<br>Clear CMOS Button<br>Audio Jacks |
| Warranty Period | 3 Years |
| Product Page | Link |

58 Comments


duploxxx - Wednesday, March 11, 2015

board to differentiate with 18 ports, but anandtech does not test the performance of each type of port. then why bother posting this review? waste of time, for the rest this is just another board out of the 101

Gnarr - Wednesday, March 11, 2015

I have to agree with duploxxx. This board seriously needs a storage benchmark.

petar_b - Friday, January 29, 2016

no, board doesn't need storage benchmark, you lack some experience with SAS.

dicobalt - Wednesday, March 11, 2015

This board is for people who play games and happen to have a buttload of porn. Don't act like it's for anything else.

niva - Tuesday, March 17, 2015

This is exactly why we are extremely interested in this board. Is there a problem?

petar_b - Friday, January 29, 2016

Get at a TV and watch porn there; you can't afford this mobo anyway.

austinsguitar - Thursday, March 12, 2015

I will side with you duploxx... there is no reason to buy this board except to get those sata ports.... why in the HELL is this without that kind of test... anandtech.... what are you doin...

Tchamber - Friday, March 13, 2015

Yeah, that's much too harsh. Any one who has followed SSD/SATA on this site for the last three or so years knows that SATA is already saturated. There's no longer any reason to test a board's storage performance.

abufrejoval - Thursday, March 12, 2015

I believe that’s a little harsh!

With the information you have been provided on this site, you can use your own powers of deduction to come up with answers.

To expect that Ian go through all the potential permutations and variants is a little much, especially when the technical limitations are clear and testing software RAIDs is beyond the scope of the article.

With everything south of the DMI passing through the equivalent of 4 PCIe 2.0 lanes, or 16Gbit/s of bandwidth, you can deduce that 10x 6Gbit SATA ports won't deliver 60Gbit/s to the CPU, especially with network, USB and all other peripheral traffic hanging in there as well.

So if you hang SSDs on all these PCH ports, that's because you like them quiet or with fast access times, not because you expect their aggregate bandwidth to arrive at the CPU.

Beyond the limits of the DMI I doubt you'll see any significant bottleneck inside the PCH so you can do your math: Any single 6Gbit SATA drive capable of delivering 6Gbit of data will very likely have that data actually arrive at that speed at the CPU. Any combination of SATA drives on the PCH will be bandwidth constrained at 16Gbit.

The Avago/LSI 3008 at 8x PCIe 3.0 (63Gbit/s) has a pretty good chance to deliver top 8-port SATA (48Gbit/s) performance without creating much of a bottleneck, while 8x12GBit SAS (96Gbit/s) would potentially fail to deliver with that chip. On the other hand LSI chips typically deliver top performance, that is very close to the theoretical maximum the connections allow, even with RAID5 and RAID6 on the chip.

So there you go: The Avago/LSI SAS HBA has a very good chance of delivering the aggregate bandwidth you expect even if loaded with top notch SSDs, while the 10Port PCH is most likely better used with spinning rust.

wyewye - Friday, March 13, 2015

Abufrejoval, that's not a review, that's a butt-load of theoretical assumptions. Assumptions are the mother of fuckups. In practice you may discover different numbers, hence we read reviews online before buying.

Stop apologizing for Ian's incompetence/laziness!